AI is getting more personal — and that is exactly why smartphone makers are trying to keep more of it on your phone.

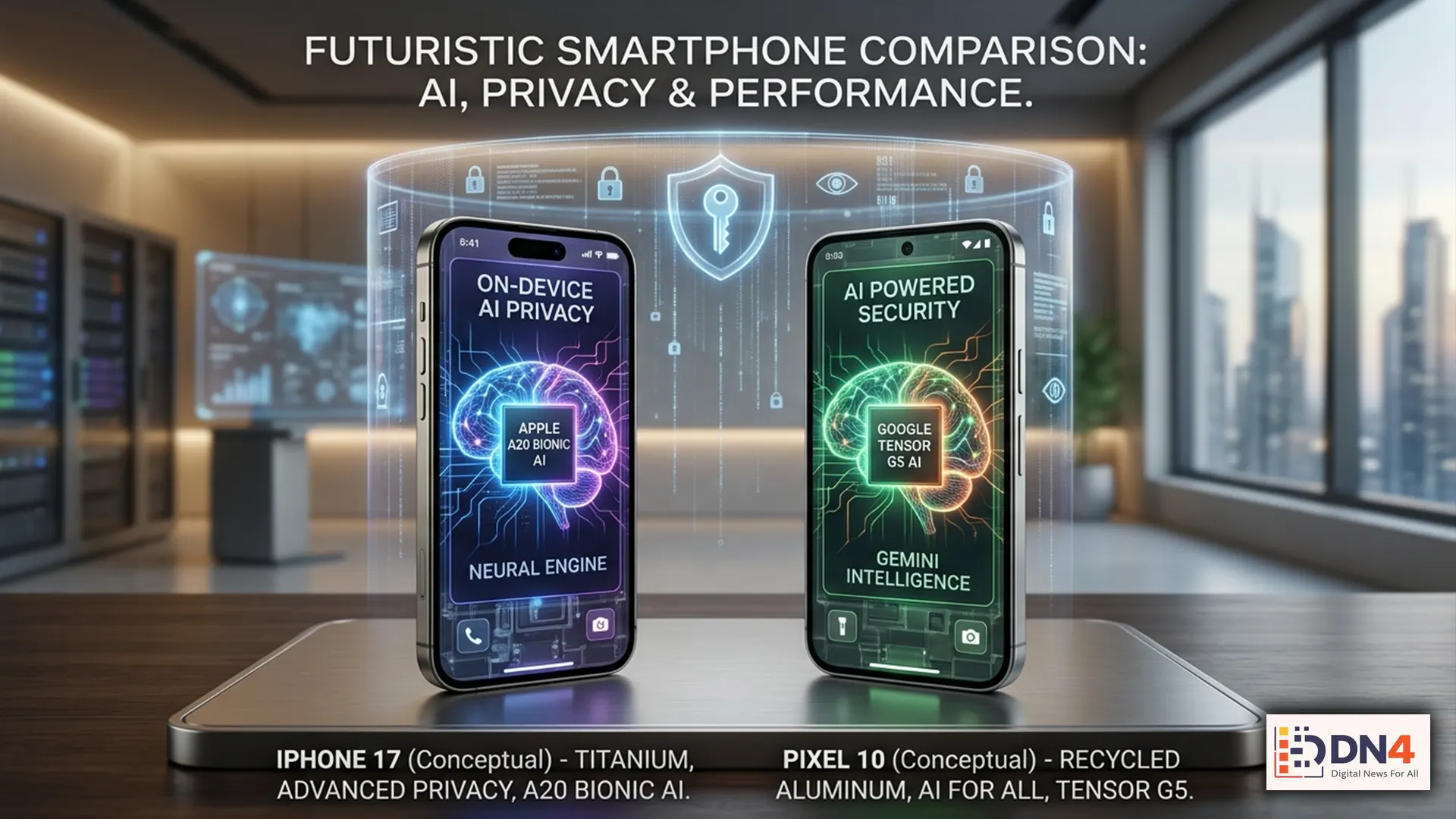

As the iPhone 17 and Pixel 10 shape up to be two of the most important phone launches in the next consumer tech cycle, one theme is becoming impossible to miss: both Apple and Google are increasingly prioritizing on-device AI.

That may sound like a technical footnote. It isn’t. It could end up being one of the most important shifts in how everyday users experience artificial intelligence — not just because it affects speed and features, but because it changes the privacy equation.

In simple terms, the next phase of mobile AI is not just about what your phone can do. It is about where the AI happens.

What “on-device AI” actually means

Most people have already used AI features on their phones without thinking much about where the processing happens. Sometimes a request is handled locally. Other times, your phone sends data to remote servers for heavier computation.

On-device AI means more of that intelligence runs directly on the phone itself — using its own chips, memory, and optimized machine learning models — rather than relying primarily on the cloud.

That matters because local processing can improve three things at once:

- Privacy: less of your sensitive data has to leave your device

- Speed: responses can feel faster and more immediate

- Reliability: some features can work even with a weak connection or none at all

Apple has already framed this approach as central to Apple Intelligence, while Google has steadily expanded local AI capabilities across the Pixel ecosystem.

Why Apple and Google are both leaning into local privacy

The reason is simple: modern AI is powerful, but it is also data-hungry.

If AI features are expected to summarize your messages, rewrite your emails, understand your schedule, search your photos, transcribe calls, or act on your behalf, they need access to deeply personal context. That creates an obvious trust problem.

Apple’s public messaging around privacy has increasingly emphasized processing personal context securely, while Google has pushed a similar story through its Pixel AI experiences and on-device Gemini integrations.

That does not mean everything stays on the phone all the time. It doesn’t. But the strategic direction is clear: the more personal the task, the more valuable local processing becomes.

Why the iPhone 17 is likely to double down on this

Apple’s hardware strategy has been pointing toward this moment for years.

Its custom silicon roadmap, from the Neural Engine to increasingly powerful A-series chips, has been laying the groundwork for AI features that can run efficiently without always phoning home to the cloud. Reports and analysis from outlets like The Verge and Bloomberg Technology have repeatedly highlighted Apple’s push to make AI feel native, personal, and tightly integrated into device-level workflows.

That matters for the iPhone 17 because Apple does not just want AI to be impressive — it wants it to feel trustworthy enough for everyday use.

And in Apple’s world, trust is a product feature.

If the iPhone 17 expands AI writing tools, notification intelligence, contextual assistance, or private app-level automation, it will likely do so by keeping as much of that processing local as possible before escalating more complex tasks elsewhere.

Why the Pixel 10 may be even more aggressive

Google has a different strategic advantage: it is arguably moving faster across the AI stack.

Where Apple tends to emphasize control and polish, Google often moves by shipping more ambitious AI capabilities earlier — especially when it can use Pixel hardware as a showcase for what Android AI can become.

That makes the Pixel 10 especially interesting. Google’s Tensor chip strategy has always been about more than benchmark bragging rights. It has been about enabling AI features that feel “ambient” and integrated — voice processing, image editing, call handling, summarization, and assistant behavior that happens in the background rather than as a separate app.

According to Google’s AI product updates, the company increasingly sees personal AI as something that should operate across everyday workflows. But that only scales if users believe it can do so without becoming invasive.

That is where on-device AI becomes more than a technical architecture. It becomes a trust layer.

What “local privacy” really means — and what it doesn’t

This is where the marketing gets slippery.

When companies say AI is “private” because it runs on-device, that does not automatically mean nothing is shared. It means some sensitive tasks can be handled locally, reducing how often your data needs to leave your phone.

That is a meaningful improvement. But it is not magic.

Users should still expect a hybrid model where:

- Simple or sensitive tasks run locally

- Heavier generative tasks may still rely on cloud infrastructure

- Permissions, model behavior, and account settings still matter

- Privacy depends partly on how well companies explain where data goes

In other words, local privacy is about minimizing exposure — not eliminating it completely.
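To make the hybrid idea concrete, here is a minimal sketch of how a phone might decide where a request runs. It is an illustration only, not how Apple or Google actually implement routing: the `AiTask` fields, the `Route` values, and the `route` function are all hypothetical names invented for this example.

```python
from dataclasses import dataclass
from enum import Enum


class Route(Enum):
    ON_DEVICE = "on_device"
    CLOUD = "cloud"
    UNAVAILABLE = "unavailable"


@dataclass(frozen=True)
class AiTask:
    # All fields are illustrative assumptions, not a real vendor API.
    name: str
    sensitive: bool      # touches messages, photos, calendar, voice, etc.
    heavy: bool          # too demanding for the (hypothetical) local model
    cloud_allowed: bool  # user permission or account setting


def route(task: AiTask, online: bool = True) -> Route:
    """Pick where a task runs under a simple local-first policy."""
    # Simple or sensitive tasks stay on the phone.
    if task.sensitive or not task.heavy:
        return Route.ON_DEVICE
    # Heavy, non-sensitive tasks go to the cloud only if the user
    # allows it and the phone has a connection.
    if task.cloud_allowed and online:
        return Route.CLOUD
    return Route.UNAVAILABLE


summary = AiTask("summarize messages", sensitive=True, heavy=False, cloud_allowed=True)
image = AiTask("generate image", sensitive=False, heavy=True, cloud_allowed=True)

print(route(summary).value)              # on_device
print(route(image).value)                # cloud
print(route(image, online=False).value)  # unavailable
```

Real systems are far more nuanced, but the shape is the point: the permission flag and the connection check are exactly the settings and conditions a user would want a phone maker to explain clearly.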

Why this matters more than flashy AI demos

Consumers have already seen plenty of AI launch events filled with dazzling demos. But over time, the features that actually matter tend to be the ones people trust enough to use repeatedly.

That means the real winners in mobile AI may not be the brands with the loudest launch keynote. They may be the ones that make users comfortable enough to let AI interact with their most personal digital environments: messages, photos, notes, calendars, and voice.

If Apple and Google are both prioritizing on-device AI, it is because they understand something bigger: privacy is no longer separate from product design — it is now central to the AI user experience itself.

What this means for buyers in 2026

If you are choosing between the next generation of flagship phones, AI should not just be judged by how clever it sounds in a keynote.

You should also ask:

- What AI tasks work offline?

- What features stay on-device?

- Which tasks trigger cloud processing?

- How transparent are the privacy settings?

- How useful are the features in real daily life?

Questions like these are likely to become a defining part of smartphone buying in the next cycle, right alongside camera quality, battery life, and performance.

The iPhone 17 and Pixel 10 are not just racing to add more AI. They are racing to make AI feel safe enough to become normal.

That is why on-device AI matters.

It is not just a chip story. It is not just a software story. It is the foundation of how the next generation of personal AI may earn — or lose — user trust.

And in 2026, trust may be the most valuable smartphone feature of all.
